SIF1 video compression format specification

This document, like other similar specifications, describes only decoding algorithm. Also, perhaps, some issues related to encoding will be described in annex to this document. Material presentation level of this specification is chosen with the expectation that the reader is familiar with general principles of SIF conversion, that are described in published patent.

General decoder structure and description of used algorithms.

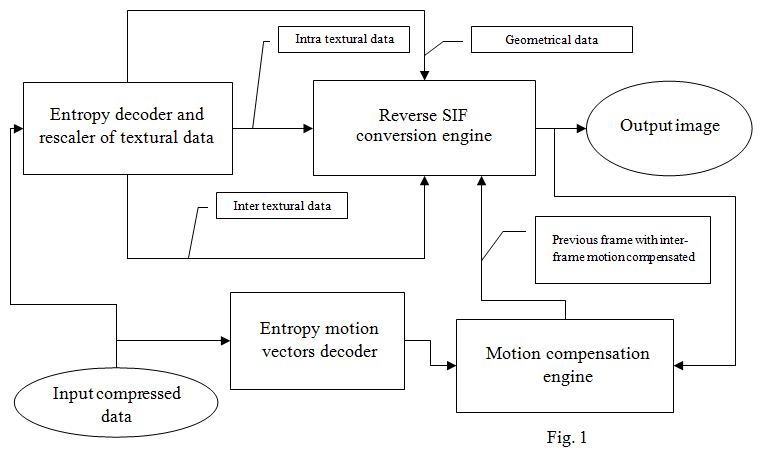

General decoder structure is traditional and rather simple. In some parts it even simpler than MPEG1 decoder structure. At the same time, through the use of a number of unique algorithms in each functional decoder unit, very high compression efficiency achieved. General decoder structure is shown on Fig. 1.

Input compressed data is fed to two independent entropy decoding engines.

The first decoder decodes and rescales (inverse quantizes) input intra and inter textural data, and also decodes geometry control data. Geometric data contains information about decomposition of the compressed images on the resampling patterns, and texture data represents as one-dimensional arrays of decoded subsamples of these patterns. All three of the above types of data are used by the reverse SIF conversion engine for restoring compressed image. Image of the previous frame in which inter-frame motion was compensated using motion compensation engine is also used for the recovery of inter-coded resampling patterns.

The second decoder decodes motion vectors data, that are needed for motion compensation engine operation.

Thus, the decoder has four main functional units that implements four basic decoder algorithms. Herewith, two entropy decoder uses a number of unified algorithms.

First let's see these common algorithms:

- A uniform range coder. Code of this encoder is a modification of the public property code written by Eugene Shelvin and differs from it in a reduced precision integer arithmetic. This encoder can be implemented on any hardware that supports hardware multiplication of two 16-bit numbers.

- Unified exclusive algorithm of fast binary PPM (Prediction by Partial Matching) encoding with inheritance between different order contexts mechanism.

- Several context-applied bPPM codecs with contexts of rather high order. In some cases the order comes up to 3. However, the size of each context is small and it usually doesn't exceed 16, that is 4 binary bits. Such algorithm was chosen because the decoder doesn't use a predetermined table of probabilities, as it done in the CABAC encoder of H.264 standard. Instead, the algorithm is optimized for the fastest statistics gaining at the beginning of encoding.

- Input data binarization is usually performed using an algorithm of sign, mantissa and exponent allocation from number with encoding each of this components by individual bPPM codecs.

- Two algorithms of entropy model weighting coefficients adaptation – fast, for established context and slow, more accurate, for new unstable contexts.

- Entropy model state resets only with encoding of each keyframe. It lowers stability of the codec to errors in the transmission channel, but increases the efficiency of the entropy codec. Implementation of other entropy codecs modes, that are more resistant to broadcast through a noisy communication channels is also possible in the future.

Now let's turn to the individual features of each major functional unit.

Entropy decoder and inverse quantizer of textural data uses the following individual algorithms:

- Current controlled geometric data is used as one of the contexts in bPPM texture data codecs.

- Individual baseline quantization threshold is decoded for each group of 32 texture samples.

- For each individual texture sample baseline quantization threshold is recalculated into an individual quantization threshold, which depends on the sample position in a hierarchical tree structure of geometric data.

- Quantized texture data rescales with a nonlinear, psycho-visual adapted, inverse quantization tabular algorithm.

Entropy motion vector decoder uses the following individual algorithms:

- Block algorithm of motion vectors representation with variable block size.

- Size of the block described by a single motion vector could vary from 64x64 pixels to 4x4 pixels. Herewith half height or width rectangular blocks are used. That means that all possible configuration blocks in a row from 64x64, 32x64, 64x32 … to … 8x8, 4x8, 8x4, 4x4 are supported.

- A tree structure is used for encoding of motion vectors blocks location. It is more efficient than usual quadtree (that is used in H.264 standard, for example).

- Very large motion vectors with size up to 16384 for each coordinate are supported.

- Adaptive selection of the predictor's type is used for each encoded motion vectors.

In general we can say that coding of motion vectors in SIF1 is more effective than in H.264, that means that relative size of motion vectors in SIF1 output stream is less than in H.264 output stream.

Motion compensation engine has the following unique features:

- Adaptive interpolation filters with switchable length are used for calculating of subpixel shifts.

- Two adaptive switching algorithm are using for removing sharp differences on the block boundaries, that are result of the block motion compensation that lead to degradation of image visual quality.

- The first algorithm is a standard algorithm for overlapped block motion compensation. However, its feature is using small overlapping radius. This algorithm is applied in cases when the second algorithm is not effective.

- The second algorithm is a unique algorithm which uses a special pre-post conversion at the blocks boundary. Its advantage is that it allows to get sufficiently high efficiency in removing sharp differences on the block boundaries with a very small degree of overlap between blocks, and, accordingly, without the need of calculating "extra" sub-pixel shifts for overlapping areas. It provides high performance of motion compensation engine and its increased efficiency.

- Half-sized blocks, with a minimum size of 2x2 pixels, are used for chroma motion compensation.

- Originally, subpixel interpolation algorithm was developed for qpel interpolation, but only hpel interpolation filters are now implemented. Interpolation algorithm will be fully implemented in the near future.

In general we can say that a number of unique algorithms are implemented in the motion compensation engine. Application of these algorithms solved problem of efficient motion compensation for such algorithm, that doesn't have separate independent conversion blocks, as SIF.

SIF conversion engine has the following features:

- A tree structure in the form of embedded quadtree is used for geometric data representation. It is approximately equivalent to the structure described in the patent. Now this is not the most efficient tree structure that is possible, but that "historically" turned.

- 4 levels of base Laplace pyramid decimation, which corresponds to the size of the base, the lowest frequency image at 1/16 horizontally and vertically relative to the original image, are used for luminance.

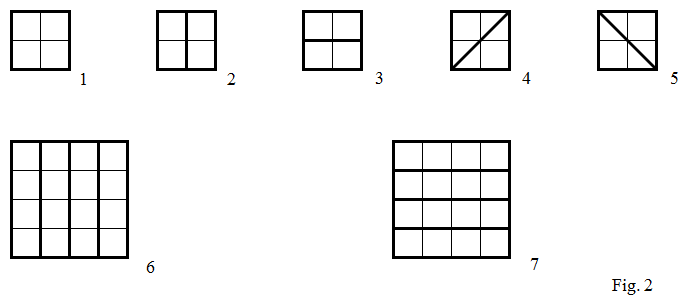

- Total seven basic oversampling patterns. Five of them have a base in the non-overlapping interpolation of a 2x2 pixel, and two have a base of a 4x4 pixel. Graphically non-overlapping parts of these patterns are shown on Fig. 2.

Thus, the first pattern consists of a simple single subsample from a lower level of the Laplace pyramid. Patterns from 2 to 5 represent an extrapolation of vertical, horizontal or two different diagonal directions drop, respectively. Each of these patterns consists of 2 subsamples. Finally, two large patterns on the 4x4 matrix also represent a simple extrapolation of the horizontal and vertical drops, but more extensive than the 2 and 3 patterns. Two last patterns consists of 4 subsamples each.

- In my opinion, diagonal patterns are made quite badly. Now I would have made them differently. In real coding, they rarely work. They help only with compression of diagonal drops at an angle close to 45 degrees, which is rare.

- It is easy to see that current patterns extrapolate ordinary local differences of brightness. If we consider that by using only first 3 patterns SIF codec is similar to classical wavelet codecs, we can assume that in current SIF algorithm only the most simple, basic functionality is implemented.

- Individual adaptive correction interpolation filters with overlap are used for each pattern. This is accompanied by adaptive switching between the two - long or short versions of the correction filter.

- When interframe compression current pattern coding mode (inter or intra) can be chosen for each node of embedded quadtree. That is for every square with a maximum size of 16x16 pixels and a minimum size of 2x2 pixels.

- In the interframe encoding mode, there is another hidden pattern - so-called blank pattern. It represents a square area, similar to the first pattern, but for which subsample is not encoded and transmitted to the decoder as for this area inter-frame difference is considered to be close to zero. A special simplified filter for removal of the possible artifact with square boundaries is used for such area.

This text is under «Creative Commons Attribution 3.0 Unported» license.

This text is under «Creative Commons Attribution 3.0 Unported» license.

SIF1 specification license

|